AI is no longer confined to data science teams or controlled development environments. It’s quietly spreading across infrastructure — embedded in applications, packaged into containers, and deployed across compute workloads.

The problem? Most organizations don’t know where their AI lives.

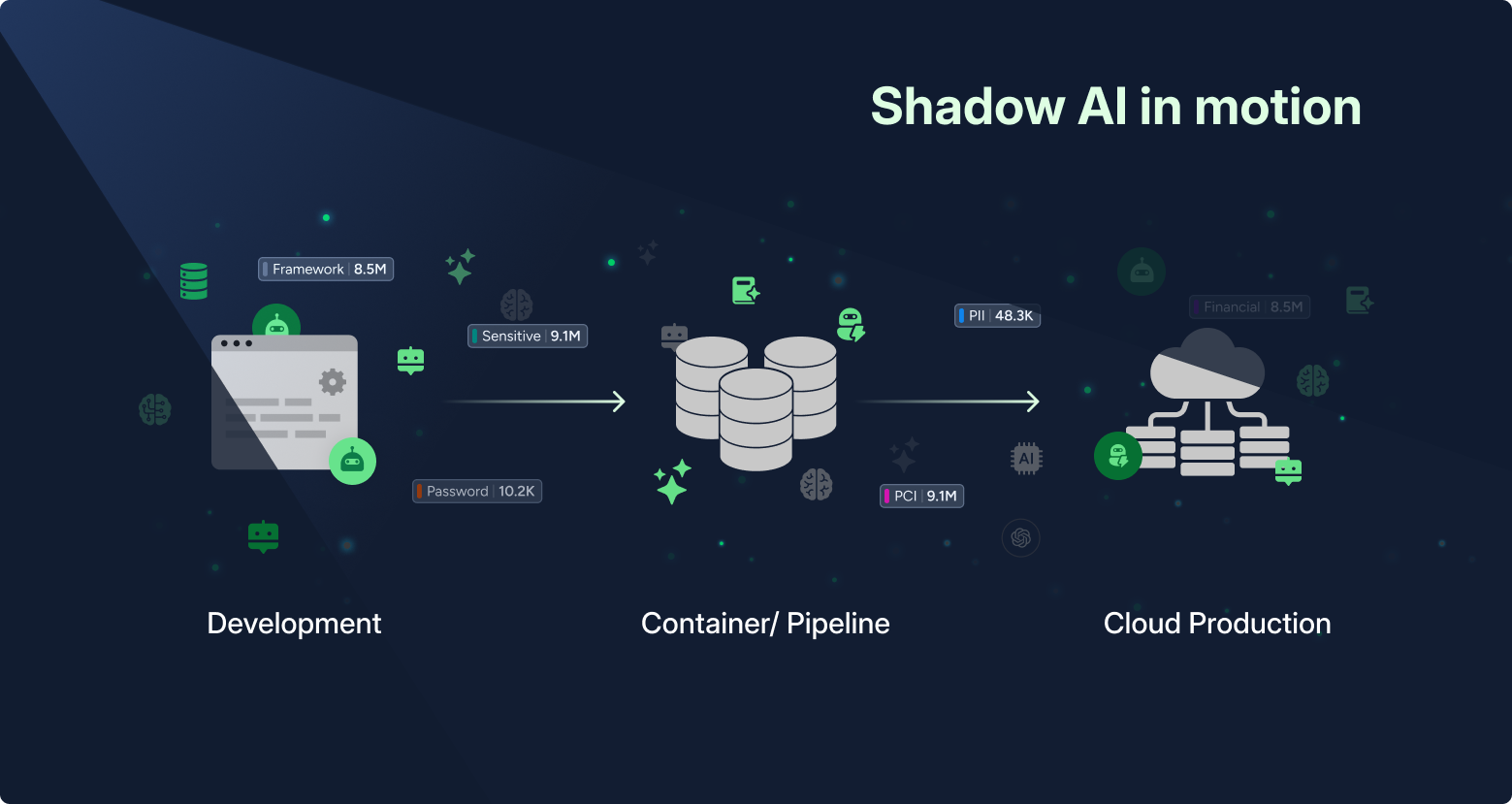

The Rise of Shadow AI in Compute

Security teams have long focused on code repositories and sanctioned AI projects. But today, AI is increasingly introduced through software packages and dependencies — often without centralized visibility or governance.

A developer adds a Python library. A container image pulls in a model runtime. A workload includes an AI framework for a one-time use case. Suddenly, AI is running in production without being tracked, secured, or governed.

This is shadow AI in its most operational form: AI at the workload layer, introduced through packages, dependencies, and deployed components that sit outside centralized visibility and governance.

The lack of visibility is more than a governance problem. It opens a security gap. In over 90% of breaches, preventable gaps such as limited visibility, inconsistently applied controls, or excessive identity trust materially enable the intrusion.

When AI software packages are introduced through libraries, containers, or dependencies, they often bypass traditional security tracking. They don’t show up in AI inventories, they aren’t governed by policy, and they may never be reviewed for risk.
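To make the idea of package-level discovery concrete, here is a minimal sketch of how AI packages might be spotted in a single Python environment. It is an illustration only, not how Cortex Cloud performs detection. The watchlist contents and the function names `find_ai_packages` and `installed_distributions` are assumptions made for this example; only `importlib.metadata` is a real standard-library API.

```python
import re
from importlib import metadata

# Hypothetical watchlist for illustration; a real inventory would use a
# much larger, curated list of AI frameworks and runtimes.
AI_PACKAGE_WATCHLIST = {
    "torch", "tensorflow", "transformers",
    "scikit-learn", "onnxruntime", "langchain",
}


def normalize(name: str) -> str:
    """Normalize a distribution name as described in PEP 503."""
    return re.sub(r"[-_.]+", "-", name).lower()


def find_ai_packages(installed, watchlist=AI_PACKAGE_WATCHLIST):
    """Return sorted (name, version) pairs whose name is on the watchlist.

    `installed` is any iterable of (name, version) tuples.
    """
    wanted = {normalize(n) for n in watchlist}
    return sorted(
        (normalize(name), version)
        for name, version in installed
        if normalize(name) in wanted
    )


def installed_distributions():
    """Yield (name, version) for each distribution this interpreter can see."""
    for dist in metadata.distributions():
        name = dist.metadata.get("Name")
        if name:  # skip entries with missing or broken metadata
            yield name, dist.version


if __name__ == "__main__":
    for name, version in find_ai_packages(installed_distributions()):
        print(f"{name}=={version}")
```

Run inside a container or VM, a script like this would only cover Python packages visible to that interpreter. Model runtimes installed through system packages or other language ecosystems would need their own checks.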

Why AI Software Packages Matter

Software packages are the building blocks of modern AI applications, and identifying them is critical to ensure cross-infrastructure security since they:

- Reveal hidden AI usage: AI frameworks and libraries are often the first signal that an application is leveraging AI.

- Introduce component-based risk: Vulnerable or outdated packages can expose both the AI system and the broader environment, a risk often hidden in dependencies. As highlighted in the Unit 42 Global Incident Response Report, “Over 60% of vulnerabilities in cloud-native applications reside in transitive libraries.” In other words, the biggest risks often aren’t in the code you write but in the components you inherit.

- Form the AI bill of materials (AI-BOM): Understanding which packages power your AI systems is essential for governance, compliance, and supply chain security.
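The point about inherited components can be sketched with a small dependency walk. The example below assumes you already have a dependency graph as a plain dictionary. The graph contents, the package names `data-pipeline-utils` and `text-cleaner`, and the function `transitive_ai_packages` are hypothetical and exist only for illustration. It reports AI packages that are not declared directly but arrive through another dependency, along with the path that pulled them in.

```python
from collections import deque


def transitive_ai_packages(graph, direct, ai_names):
    """Find AI packages that are pulled in indirectly.

    graph:    dict mapping a package name to the packages it depends on
    direct:   packages the application declares itself
    ai_names: set of package names treated as AI components
    Returns {ai_package: [direct_dep, ..., ai_package]} using the first
    (shortest) path found by a breadth-first search.
    """
    found = {}
    seen = set(direct)
    queue = deque((dep, [dep]) for dep in direct)
    while queue:
        pkg, path = queue.popleft()
        # A path longer than one hop means the package was inherited.
        if pkg in ai_names and len(path) > 1:
            found.setdefault(pkg, path)
        for child in graph.get(pkg, ()):
            if child not in seen:
                seen.add(child)
                queue.append((child, path + [child]))
    return found


if __name__ == "__main__":
    # Made-up dependency graph for illustration.
    graph = {
        "data-pipeline-utils": ["text-cleaner"],
        "text-cleaner": ["transformers"],
        "transformers": ["tokenizers"],
        "requests": ["urllib3"],
    }
    direct = ["data-pipeline-utils", "requests"]
    hits = transitive_ai_packages(graph, direct, {"transformers", "tokenizers"})
    for pkg, path in hits.items():
        print(f"{pkg}: {' -> '.join(path)}")
```

Entries like these, recorded with versions, are the kind of raw material an AI-BOM would draw on. The exact AI-BOM format is outside the scope of this sketch.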

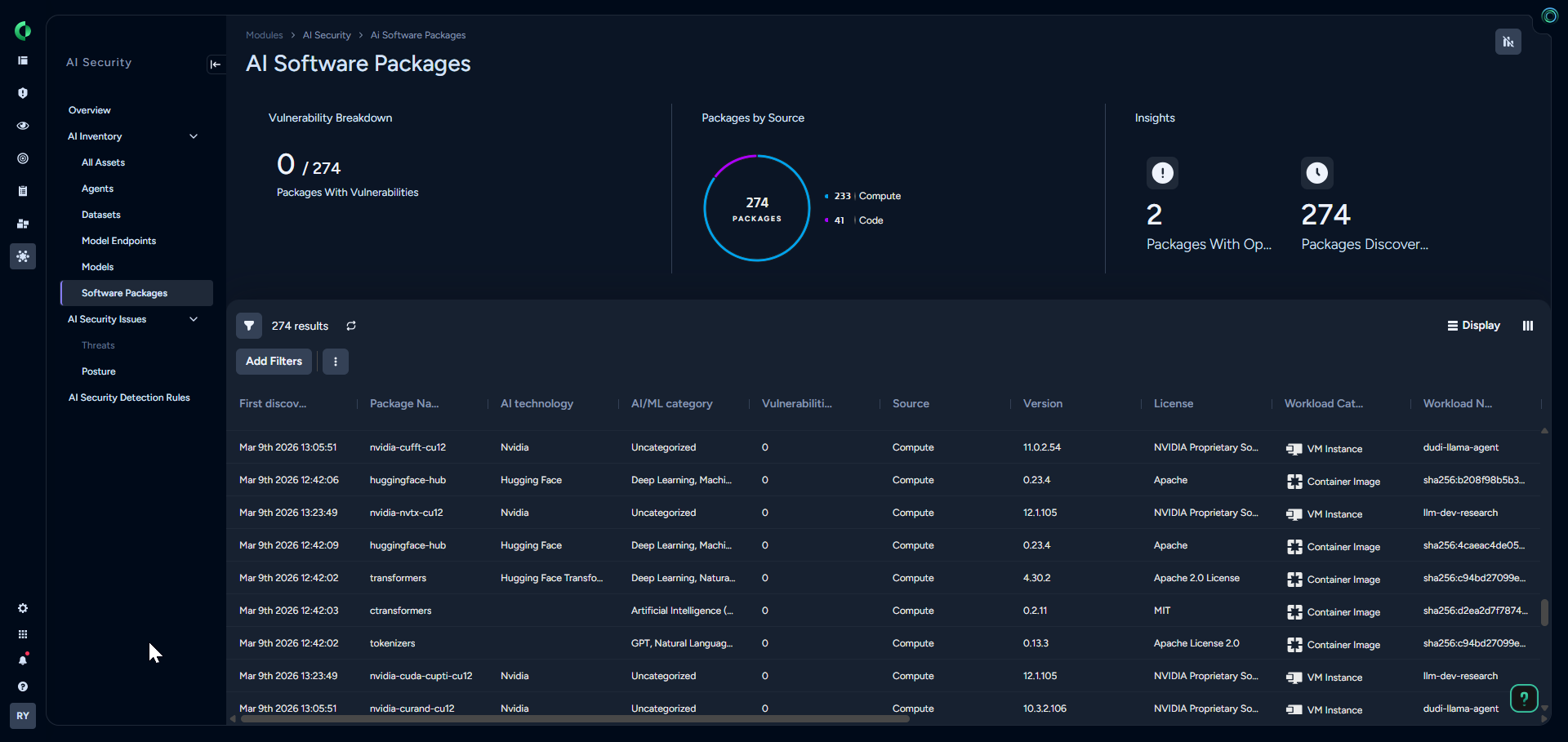

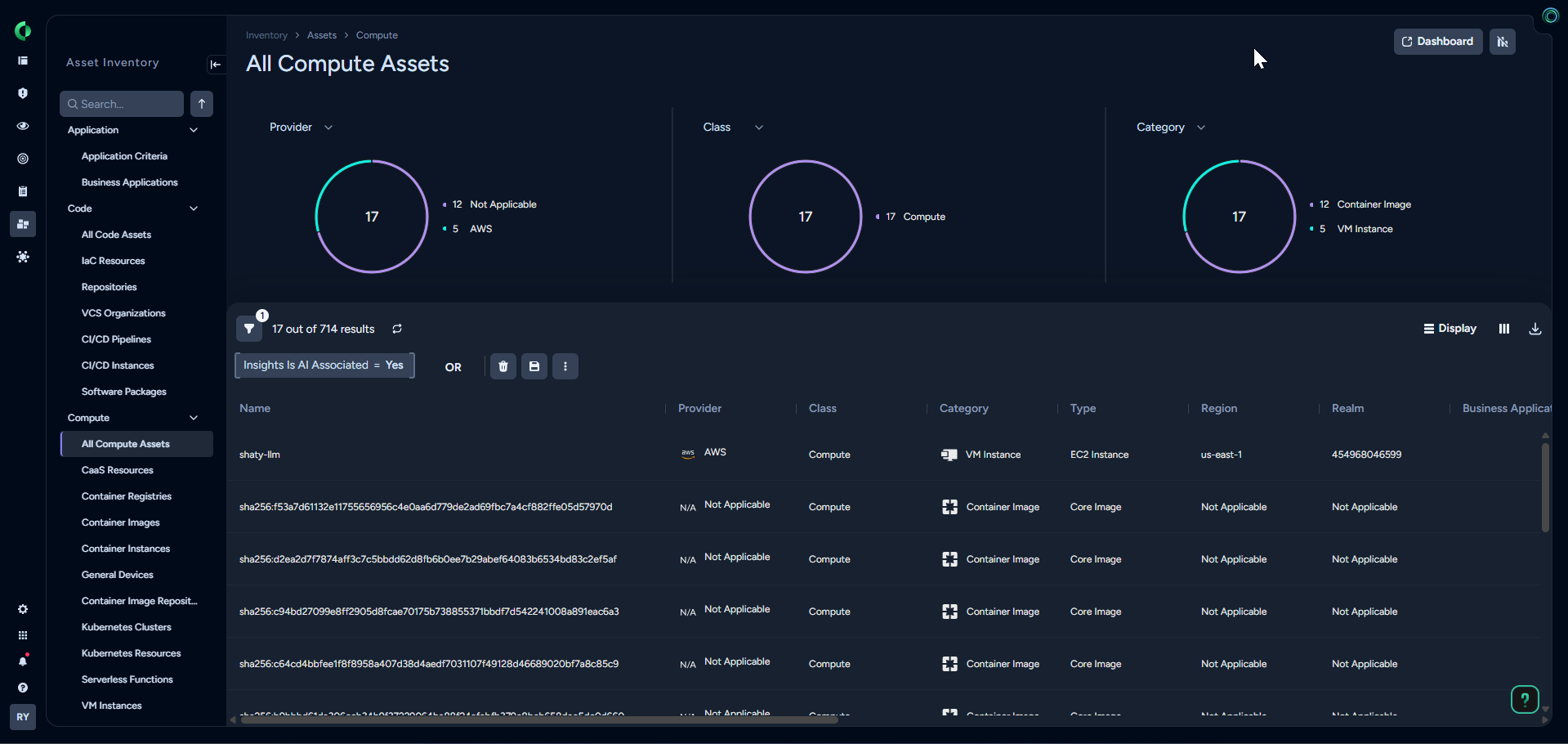

What’s New with Cortex Cloud AI-SPM: AI Software Packages on Workloads

With the upcoming April release, Cortex Cloud AI-SPM expands visibility into AI software packages and dependencies from code repositories to deployed workloads. Software Composition Analysis (SCA) identifies AI packages and dependencies in code repositories. Cloud Workload Protection (CWP) adds workload-level package visibility, revealing AI components present on deployed workloads. Together, these capabilities create a more unified AI inventory from development through runtime and extend AI-SPM with more direct insight into what AI software is present in the environment.

Where AI Is Now Visible

Security teams can now identify AI presence across a wide range of compute assets:

- VM instances with AI packages

- Running VM instances actively using AI

- VM instances with vulnerable AI packages

- Container instances with AI packages

- Container instances with vulnerable AI packages

- Images and Docker images with AI packages

- Base images containing AI components

- Base images with vulnerable AI packages

From Infrastructure Signal Detection to AI Context

What makes AI Software Packages on Workloads powerful is context. Instead of asking “What software is running on this workload?”, security teams can now ask “Where is AI being used, what powers it, and what risk does it introduce?”

Cortex Cloud shifts AI security from guesswork to explicit visibility.

Real-World Scenario: Hidden Risk in a Container

A platform team deploys a container image for data processing. Unknown to security:

- The image includes an open-source AI library

- The library has a known vulnerability

- The container has access to sensitive data

Without visibility into AI packages on workloads, the team simply sees another container. With Cortex Cloud AI-SPM, the same container is identified as a high-risk AI workload with vulnerable components and access to sensitive data.
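The scenario combines three signals: an AI package is present, that package is vulnerable, and the workload can reach sensitive data. The sketch below shows one hypothetical way to combine those signals into a label. The `Workload` fields, the `classify` function, and the risk labels are assumptions for illustration and do not represent Cortex Cloud's scoring logic.

```python
from dataclasses import dataclass, field


@dataclass
class Workload:
    """Hypothetical record describing one deployed workload."""
    name: str
    ai_packages: list = field(default_factory=list)
    vulnerable_packages: set = field(default_factory=set)
    has_sensitive_data_access: bool = False


def classify(workload: Workload) -> str:
    """Combine the three signals from the scenario into a coarse label."""
    if not workload.ai_packages:
        return "no-ai"
    vulnerable_ai = [
        pkg for pkg in workload.ai_packages
        if pkg in workload.vulnerable_packages
    ]
    if vulnerable_ai and workload.has_sensitive_data_access:
        return "high"
    if vulnerable_ai or workload.has_sensitive_data_access:
        return "medium"
    return "low"


if __name__ == "__main__":
    container = Workload(
        name="data-processing",
        ai_packages=["transformers"],
        vulnerable_packages={"transformers"},
        has_sensitive_data_access=True,
    )
    print(container.name, classify(container))
```

The design choice worth noting is that no single signal is enough on its own. The label changes only when AI presence is joined with vulnerability and data-access context, which is the distinction the scenario draws between infrastructure monitoring and AI-aware security.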

That’s the difference between infrastructure monitoring and AI-aware security.

Mapping Shadow AI Across the Lifecycle

AI doesn’t appear in just one place. It spans the application lifecycle.

| Stage | Shadow AI Signal | Risk |
| --- | --- | --- |
| Code | AI libraries in repositories | Unreviewed AI usage |
| Build | AI packages in images | Vulnerable dependencies |
| Deploy | AI packages on workloads | Unauthorized AI execution |
| Runtime | Active AI workloads | Data exposure, data misuse |
| Operations | AI interaction with data | Compliance and governance gaps |

The lifecycle view shows how shadow AI can surface from code to operations. In practice, teams need to narrow that broad picture to the workloads that contain AI packages so they can investigate risk and act.

Why It Matters – Now

The rise of AI agents and automated workflows is accelerating the problem. Recent industry research from SACR highlights that AI is no longer just an application layer but is also an autonomous execution layer capable of accessing data, calling APIs, and executing workflows. That means:

- AI systems can act on data.

- AI agents can access resources.

- AI workflows can execute autonomously.

All of this is powered by software components that often go untracked.

Who Needs to Know?

- Security teams: Gain visibility into AI software packages and the workloads running them.

- Cloud and platform teams: Understand where AI is embedded in infrastructure.

- AI and data leaders: Ensure governance across the AI supply chain.

- Palo Alto Networks customers: Achieve parity and go beyond traditional workload visibility by explicitly mapping AI usage.

From Shadow AI to Full Control

AI is no longer something you deploy. It’s something that emerges.

By illuminating AI software packages across workloads, Cortex Cloud AI-SPM turns hidden AI usage into actionable insight:

- Discover AI wherever it runs.

- Understand the risks AI introduces.

- Govern AI across the full lifecycle.

In the age of AI, visibility starts with the smallest building block.

Learn More

Stop Shadow AI from haunting your workloads. Book a Cortex Cloud demo to see how you can gain full visibility into hidden AI packages and secure your environment from code to runtime.