The Pivot to Large-Scale Intelligence

For decades, Telecommunications Service Providers have been the central nervous system of the global economy, tasked with a singular, critical mission: connecting people.

The industry spent vast amounts of capital building networks that moved voice, then text and finally high-speed mobile data. We succeeded. According to GSMA's most recent report, there are 5.8 billion unique mobile subscribers. The world is connected.

But the mission is changing fast. We are no longer just moving data; we are now expected to host intelligence.

Today’s enterprises are drowning in data and desperate for AI-led capabilities to analyze and process the information. They are struggling with the immense capital costs, the scarcity of GPUs, and complex data sovereignty regulations that make public cloud options difficult for sensitive workloads.

We are no longer living in the communications age, the internet age, the social network era, or even the generative AI era. We are entering the Agentic Era. In this new era, data is the raw resource, and AI agents and models are the machinery that refines it into value. The infrastructure required to do this – from massive data ingestion to complex training and high-volume real-time inference – is called the "AI Factory."

And these AI factories are not being designed for human-speed operations, but rather for machine-speed operations.

This creates a generational opportunity for telecommunications service providers (SPs). By building new data centers and edge locations, or transforming existing ones, into AI factories, SPs can offer hosted AI services that are high-performance, low-latency and compliant with regional requirements.

However, building an AI factory isn't just about racking GPUs. It is about realizing that AI infrastructure presents a fundamentally new threat landscape that legacy security cannot handle. If the SP’s AI factory is compromised (if models are poisoned, identities hijacked, or training data exfiltrated), the damage to reputation and national infrastructure is incalculable.

To capture the AI opportunity, service providers need more than computing power; they need a blueprint for a secure AI architecture. At Palo Alto Networks, we view the security of the AI factory as a three-tiered layer cake, requiring holistic, integrated protection from the physical infrastructure up to the AI agents themselves.

The AI Threat Model Is a Structural Shift

For service providers building AI Factories, the challenge is not simply adding another workload to the data center. AI changes the risk equation entirely. It introduces new traffic patterns, new identities and new forms of autonomy that traditional network and core security architectures were never designed to govern.

- Data Gravity Becomes Attack Surface: AI training and inference environments ingest massive volumes of data from distributed enterprise customers, partners and edge environments. This scale creates a new exposure layer. Malicious payloads, embedded model manipulation, and command-and-control traffic can hide within high-throughput AI data flows. Inspection models built for deterministic traffic patterns struggle when confronted with dynamic, AI-driven pipelines.

- Non-Human Identities at Scale: An AI Factory is more than just infrastructure; it will be populated by autonomous agents. These agents retrieve data, call APIs, invoke tools and trigger workflows across networks and cloud environments. They require elevated privileges to function. For service providers, this means managing not just subscriber identities, but fleets of machine identities operating with delegated authority.

- Agentic and Adversarial Threats: Attackers are also operationalizing AI. They probe for weaknesses faster, automate exploitation and increasingly target the AI systems themselves. Prompt injection can redirect an agent’s mission. Data poisoning can subtly degrade model integrity. Rogue agents can be manipulated to access external tools or escalate privileges. These are not traditional perimeter attacks; they are attacks on reasoning, behavior and autonomy.

For service providers offering AI-as-a-Service, the implication is clear: Securing the AI Factory requires more than network defense. It requires real-time governance of models, agents and data flows, ensuring that autonomous systems operate within defined policy boundaries while maintaining performance and scale.

The Foundation – Securing the High-Performance Infrastructure

The base of our cybersecurity stack is the physical and virtual infrastructure of the AI factory itself. This is a high-stakes environment. In a multitenant SP data center, you might have a financial institution fine-tuning a fraud detection model on one rack, and a government agency running inference on satellite imagery on the next. The barriers between these tenants must be absolute.

Foundational cybersecurity has two critical components: perimeter defense and internal segmentation.

The ML-Powered Perimeter

The front door of the AI factory must handle unprecedented throughput while performing deep inspection. Traditional firewalls, relying on static signatures, become bottlenecks and fail to catch novel threats hidden in massive data streams.

Palo Alto Networks addresses this with our flagship ML-Led Next-Generation Firewalls (NGFW). We have embedded machine learning directly into the core of the firewall. Instead of waiting for a patient zero to be identified and a signature to be created, our NGFWs analyze traffic patterns in real time to identify and block unknown threats. For an SP, this means you can provide the massive bandwidth required for AI data ingestion without compromising on security inspection at the edge.

Zero Trust Segmentation Inside the Factory

The perimeter is just the start. Once inside the data center, the biggest risk is the lateral movement of threats and malware. If an attacker compromises a low-security tenant or a peripheral IoT device, they must not be able to jump to the sensitive GPU clusters or the model storage arrays.

In an AI factory, workloads are highly dynamic and virtualized. We provide robust segmentation across both hardware and software environments. We can enforce granular policies between virtual instances, containers and different stages of the AI pipeline (e.g., isolating training environments from inference operations). This allows a breach in one segment to be contained instantly, protecting the integrity of the entire factory.
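To make the segmentation idea concrete, here is a minimal sketch of a default-deny policy check between pipeline stages. It is an illustration only, not how any Palo Alto Networks product is configured: the segment names, the `Flow` type and the `is_allowed` function are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical pipeline segments and the only flows permitted between them.
# Anything not listed here is denied by default.
ALLOWED_FLOWS = {
    ("ingestion", "training"),
    ("training", "model-store"),
    ("model-store", "inference"),
}


@dataclass(frozen=True)
class Flow:
    tenant_src: str
    tenant_dst: str
    segment_src: str
    segment_dst: str


def is_allowed(flow: Flow) -> bool:
    """Default-deny check: same tenant and an explicitly allowed segment pair."""
    if flow.tenant_src != flow.tenant_dst:
        return False  # cross-tenant traffic is never permitted in this sketch
    return (flow.segment_src, flow.segment_dst) in ALLOWED_FLOWS
```

Under these assumptions, a training job can write to its own tenant's model store, but an inference workload cannot reach back into training, and no flow crosses a tenant boundary.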

The Engine – Securing AI Agents, Apps and Identities

The middle layer of the security stack is where the actual "work" of AI happens – the models, the LLMs, the agents. This is the newest frontier of cybersecurity and where traditional tools are most deficient.

This layer faces two distinct challenges: Protecting the integrity of the AI interaction and managing the identities of the nonhuman actors.

Securing AI Apps and Agents

As enterprises evolve from standalone LLMs to agentic AI systems that reason, call tools, access data, and take action across workflows, the challenge is no longer just what a model says; it is what an AI agent does.

How do you validate that an LLM powering your AI factory does not expose sensitive information, and that autonomous agents cannot be manipulated through jailbreak prompts, tool injection or malicious instructions? How do you prevent an AI agent from accessing unauthorized systems, escalating privileges, or executing unintended actions?

This is the role of Prisma® AIRS™ – our security and governance platform for AI agents, apps, models and data. Prisma AIRS operates directly in the execution path of AI applications and autonomous agents. It enforces policy in real time, validates agent behavior, and blocks prompt injection, model manipulation and agent hijacking before they can impact the business.

Beyond filtering outputs, Prisma AIRS governs agent communications, tool access and data flows to prevent credential leakage, mission drift and unauthorized actions. For service providers delivering AI-as-a-Service, or enterprises deploying AI agents internally, Prisma AIRS enables integrity, compliance and continuous control as intelligent systems move from experimentation into mission-critical operations.

Built in alignment with emerging standards like the OWASP Agentic Top 10 Survival Guide, Prisma AIRS operationalizes best practices to defend against real-world agentic threats.

Governing Nonhuman Identity

Perhaps the most profound shift in the AI factory is who or what is doing the work. We are rapidly moving toward ecosystems of autonomous AI Agents. These agents need to authenticate to databases, authorize API calls to other services, and access privileged information just like a human employee.

If an attacker steals the credentials of a high-privilege AI agent, they own the factory.

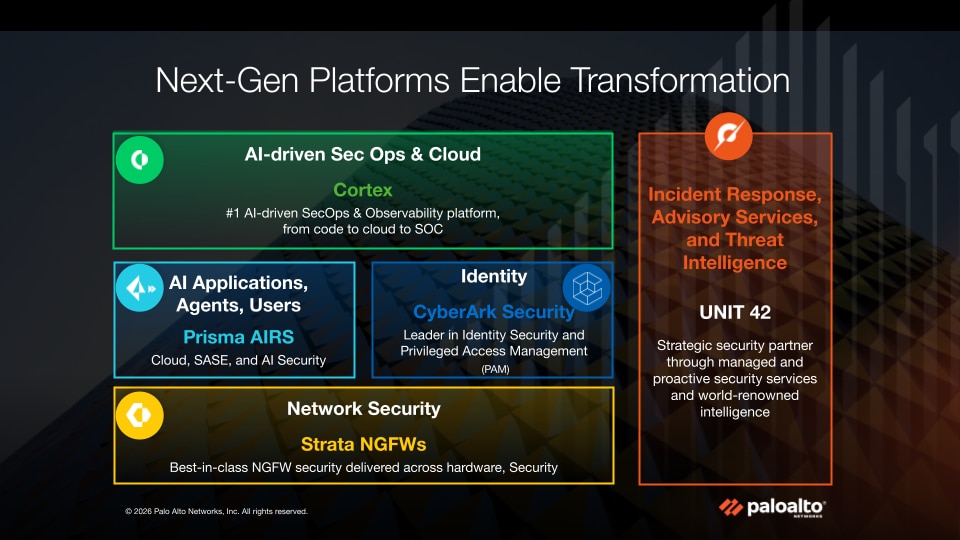

This is why the Palo Alto Networks acquisition of CyberArk, the global leader in Identity Security, is so strategic for the AI era. CyberArk specializes in protecting privileged access and, crucially, in managing nonhuman identities. By integrating CyberArk’s capabilities, we can ensure that every AI agent operating within the SP’s factory is robustly authenticated, authorized for the minimum necessary access, and monitored in its activities. We are securing the new digital workforce.
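The three properties named above (authenticated, minimally authorized, monitored) can be sketched in a few lines. This is a simplified illustration under stated assumptions, not CyberArk's API: `AgentCredential`, `issue`, `authorize`, the scope strings and the 15-minute lifetime are all hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class AgentCredential:
    agent_id: str
    scopes: frozenset      # the only actions this agent may perform
    expires_at: datetime   # short lifetime limits the value of a stolen credential


def issue(agent_id, scopes, now, ttl_minutes=15):
    """Issue a short-lived credential limited to the given scopes."""
    return AgentCredential(agent_id, frozenset(scopes), now + timedelta(minutes=ttl_minutes))


def authorize(cred, action, now, audit_log):
    """Allow the action only if the credential is unexpired and in scope; log every decision."""
    allowed = now < cred.expires_at and action in cred.scopes
    audit_log.append((now.isoformat(), cred.agent_id, action, "allow" if allowed else "deny"))
    return allowed
```

In this sketch, an agent issued only a read scope is denied a write, an expired credential is denied everything, and each decision, allowed or not, lands in the audit log for later review.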

The Overwatch – Holistic, AI-Driven Threat Management

The top layer of the stack is about visibility and speed. An AI factory generates a deafening amount of telemetry data from networks, endpoints, clouds and identity systems. No human security operations center (SOC) can sift through this noise manually to find a sophisticated attack.

To fight AI-driven threats, you need AI-driven defense.

This is the role of Cortex®, our flagship platform for holistic threat management. Cortex is designed to ingest billions of data points from across the entire Palo Alto Networks product portfolio and hundreds of types of third-party equipment, normalizing it into a single source of truth.

Cortex applies advanced AI and machine learning to this vast data lake to detect anomalies that signal a complex attack spanning different threat vectors. It might correlate an unusual login event from an AI agent (detected by the identity layer) with a subtle change in outbound traffic patterns at the firewall (layer 1), recognizing it as data exfiltration in progress.
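The kind of cross-layer correlation described here can be illustrated with a deliberately simple rule. This is a sketch only and makes no claim about how Cortex works internally: the `Event` type, the event kinds and the 10-minute window are assumptions chosen for the example.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Event:
    source: str          # "identity" or "firewall" in this sketch
    entity: str          # agent or workload identifier
    kind: str            # e.g. "unusual_login", "outbound_anomaly"
    timestamp: datetime


def correlate(events, window=timedelta(minutes=10)):
    """Flag entities with an unusual login followed by an outbound anomaly within the window."""
    logins = [e for e in events if e.source == "identity" and e.kind == "unusual_login"]
    anomalies = [e for e in events if e.source == "firewall" and e.kind == "outbound_anomaly"]
    flagged = set()
    for login in logins:
        for anomaly in anomalies:
            delta = anomaly.timestamp - login.timestamp
            if login.entity == anomaly.entity and timedelta(0) <= delta <= window:
                flagged.add(login.entity)
    return sorted(flagged)
```

Neither signal is conclusive on its own; the point of the sketch is that joining them on the same entity and a short time window turns two weak indicators into one that is worth investigating.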

For a Service Provider, Cortex provides the "single pane of glass" view over their entire AI factory operations, allowing them to detect, investigate and automatically respond to threats at machine speed, vastly reducing Mean Time to Respond (MTTR).

Building the Trust Foundation for the Agentic Era

The transition to becoming an AI factory is a necessary evolution for Service Providers seeking growth in the coming decade. Your ability to offer localized, sovereign, high-performance AI services will differentiate you from large-scale public cloud providers and cement your role as an indispensable partner to enterprises and governments.

But this opportunity is inextricably linked to trust. Your customers will not move their most sensitive data and IP into your AI factory unless they are certain it is secure against modern threats.

Security cannot be an afterthought bolted onto AI infrastructure. It must be woven into the fabric of the factory, from the silicon to the software agents. By adopting a layered approach (securing the high-performance infrastructure with ML-led NGFWs, protecting models and identities with Prisma AIRS and CyberArk, and managing the entire landscape with Cortex), Service Providers can build the trusted foundations the AI era demands.

This week we’ll be at Mobile World Congress talking about our security platform for AI Factories, along with five solutions and ecosystem partners. Come see us in Hall 4, Stand #4D55.