- 1. High Cardinality Explained

- 2. Why High Cardinality Matters in Observability

- 3. Cardinality vs. Dimensionality

- 4. How High Cardinality Happens

- 5. The Impact of High Cardinality on Observability Systems

- 6. Example: How Cardinality Multiplies

- 7. How to Reduce High Cardinality

- 8. Metrics vs. Logs vs. Traces for High-Cardinality Data

- 9. Best Practices for Managing High Cardinality

- 10. Why High Cardinality Is a Governance Problem

- 11. FAQs

What Is High Cardinality in Observability?

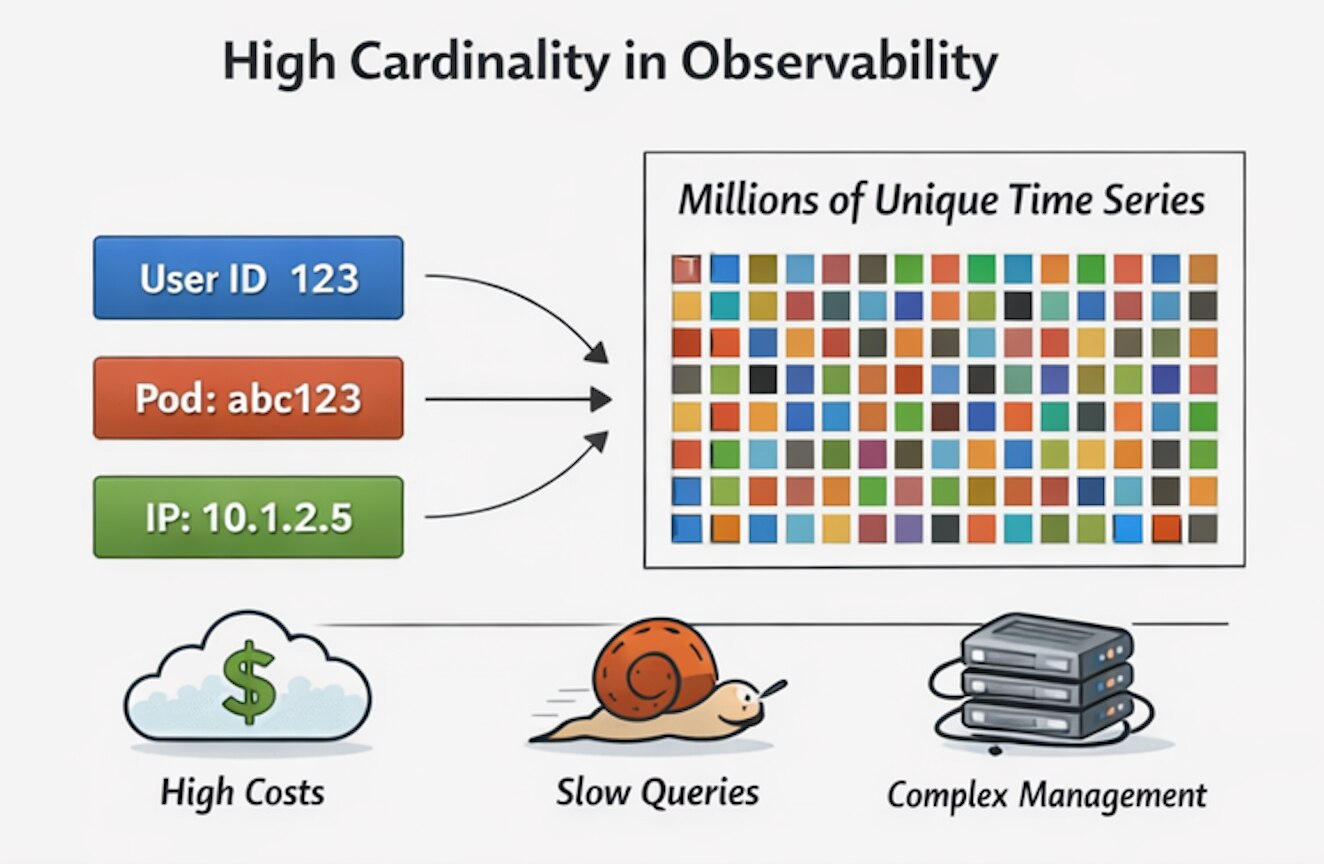

High cardinality in observability refers to telemetry data with a very large number of unique label values or label combinations. It usually happens when metrics include dynamic attributes such as user IDs, request IDs, container names, or temporary IP addresses. As those unique values multiply, observability systems become more expensive to run, slower to query, and harder to manage.

Key Points

- High cardinality means too many unique metric combinations: It happens when metrics use labels with many changing or unbounded values.

- Cloud native systems make it worse: Kubernetes, containers, microservices, and autoscaling rapidly increase the number of time series.

- Granularity comes with a cost: More detailed telemetry can improve visibility, but it also increases ingestion, query, and storage overhead.

- Governance matters as much as tooling: Teams need standards for labels, telemetry routing, and cardinality control.

High Cardinality Explained

Observability systems depend on labels and tags to organize telemetry data and make it searchable. Those labels help teams filter metrics by dimensions such as service, host, endpoint, or region. But when engineers add dynamic or effectively unlimited values to those labels, the number of unique time series grows rapidly. That growth is what defines high cardinality.

This becomes especially problematic in modern distributed systems. Security teams, SREs, and platform engineers all need granular telemetry to troubleshoot issues, trace requests, and investigate suspicious behavior. But when that granularity relies on labels such as transaction IDs, ephemeral pod names, or temporary IP addresses, the observability backend can become overloaded with millions of unique series.

In other words, high cardinality is not just a data problem. It is a performance, cost, and operational resilience problem. In a cloud native environment, poor label design can quietly degrade the very platform teams depend on for visibility.

Why High Cardinality Matters in Observability

High cardinality directly affects how well an observability platform performs under pressure. When a system must index and query massive numbers of unique time series, dashboards slow down, alerting becomes less reliable, and incident response suffers. Observability only works when teams can trust the data to be fast, complete, and available when something breaks.

This is particularly relevant in environments built around AIOps, Kubernetes, and distributed infrastructure, where telemetry volumes already grow quickly. Modern platforms need enough detail to support troubleshooting and automation, but they also need enough discipline to avoid drowning in their own data.

Cardinality vs. Dimensionality

Cardinality and dimensionality are closely related, but they are not the same thing. Dimensionality refers to the number of labels attached to a metric. Cardinality refers to the number of unique value combinations those labels produce.

A metric might include labels such as status_code, host, and endpoint. That is dimensionality. If a developer adds a label such as user_id, the dimensionality increases by only one, but the cardinality can explode because millions of unique users may now be represented in the dataset.

| Concept | Definition | Example | Operational Impact |
|---|---|---|---|
| Dimensionality | The number of labels attached to a metric | service, endpoint, region | More ways to segment and analyze data |
| Cardinality | The number of unique value combinations generated by those labels | Adding request_id or user_id | More time series, more cost, more strain on the platform |
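The difference can be made concrete with a small sketch. The label sets below are made-up illustrations, and the two helper functions are hypothetical names, not part of any monitoring library. Dimensionality counts label names; cardinality counts distinct label-value combinations.

```python
# Each entry is the label set attached to one observation of a metric.
# All values here are illustrative assumptions.
samples = [
    {"service": "checkout", "endpoint": "/cart", "region": "us-east"},
    {"service": "checkout", "endpoint": "/cart", "region": "us-east"},
    {"service": "checkout", "endpoint": "/pay", "region": "us-east"},
    {"service": "search", "endpoint": "/query", "region": "eu-west"},
]

def dimensionality(samples):
    """Number of distinct label names seen across the samples."""
    return len({name for labels in samples for name in labels})

def cardinality(samples):
    """Number of distinct label-value combinations (one per time series)."""
    return len({tuple(sorted(labels.items())) for labels in samples})

# Adding a per-user label raises dimensionality by one, but every sample
# now forms its own unique combination.
with_user = [dict(labels, user_id=f"u{i}") for i, labels in enumerate(samples)]

print(dimensionality(samples), cardinality(samples))
print(dimensionality(with_user), cardinality(with_user))
```

With only four samples the effect is small, but the pattern is the same at scale: the label count grows by one while the series count grows with the number of distinct users.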

How High Cardinality Happens

High cardinality usually starts with good intentions. Teams want more context, more precision, and faster troubleshooting. So they add more labels to their metrics. The trouble begins when those labels contain values that change constantly or have no meaningful limit.

Dynamic Identifiers in Metrics

The most common cause of high cardinality is the use of dynamic identifiers as metric labels. Examples include session IDs, request IDs, transaction hashes, unique user tokens, and one-time resource names. Each new value creates a new series, even if the underlying metric is otherwise identical.

These values may be useful for investigation, but they are usually better suited to logs or traces than metrics. Metrics are designed for aggregated numerical trends. They are not a great home for infinite variation. That is where things go sideways.

Cloud Native and Kubernetes Environments

Cloud native systems make high cardinality much more likely. Kubernetes frequently creates and destroys pods, assigns new names and IP addresses, and scales services dynamically. Microservice architectures also multiply the number of source-destination relationships teams may want to track. Every bit of that dynamism can create more unique telemetry combinations.

That is one reason cardinality problems often show up in teams working with Kubernetes and other modern cloud-native environments. These architectures are flexible by design, but they also generate a lot of short-lived identifiers that observability systems must handle carefully.

Misconfigured Integrations and Over-Collection

Third-party integrations can also introduce cardinality problems. Cloud services, security tools, and open-source collectors may emit highly granular metrics by default. If teams forward all of that raw telemetry into a central platform without filtering or normalization, cardinality rises fast. Misconfigured scrapers and aggressive defaults are common culprits.

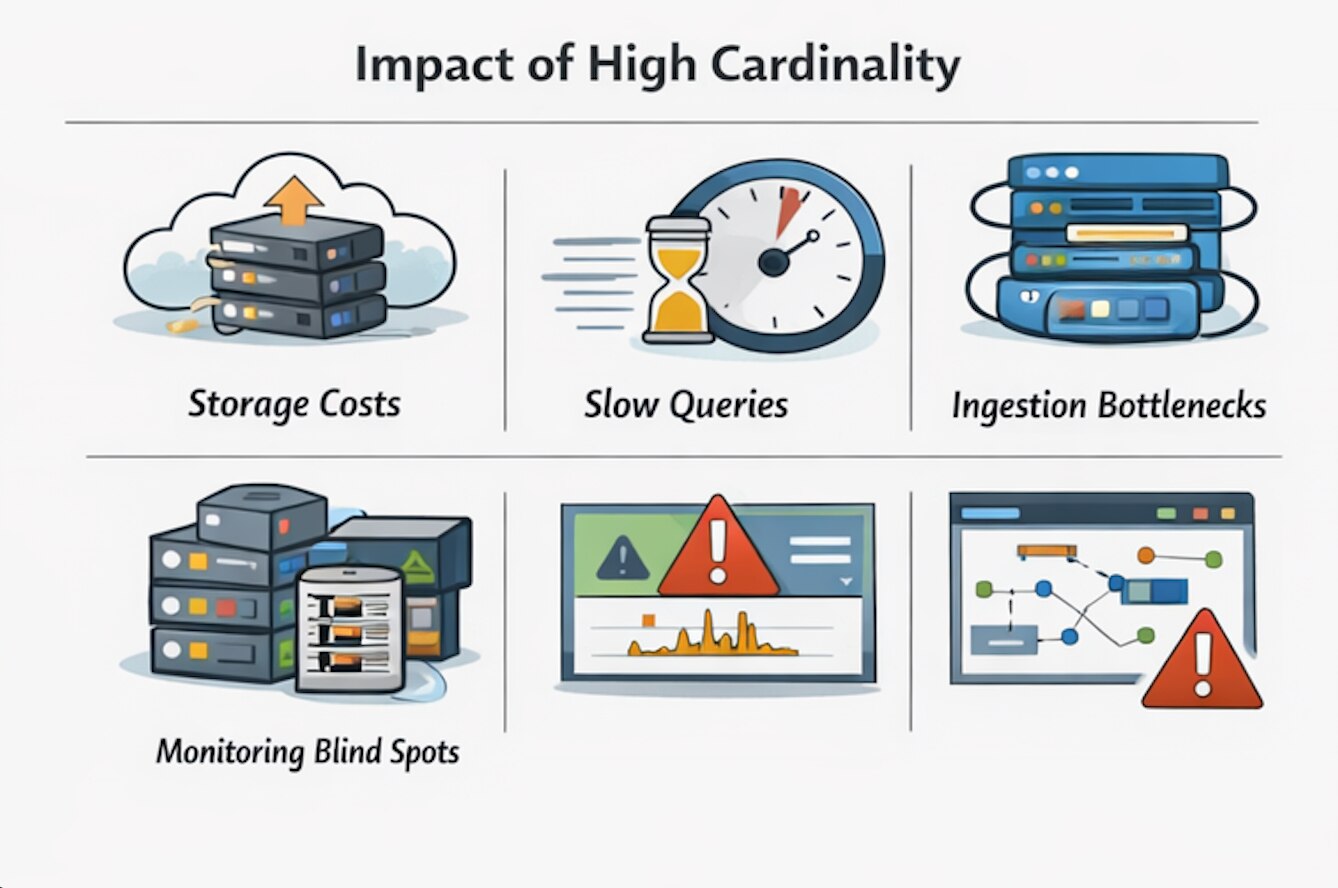

The Impact of High Cardinality on Observability Systems

Unmanaged cardinality affects observability platforms in several ways, and none of them are fun.

Higher Storage Costs

Every unique time series takes up storage space and index capacity. As cardinality grows, platforms need more RAM, more disk, and more compute to keep up. In cloud environments, that translates directly into higher bills for telemetry ingestion, storage, and processing. A sudden spike can turn a monitoring budget into a small bonfire.

Slower Query Performance

High cardinality forces query engines to search through far more indexes and series to return results. Dashboards become slower, filters become heavier, and alert queries may time out. When security and operations teams depend on real-time visibility, delayed dashboards are not just annoying. They are operationally dangerous.

Ingestion Bottlenecks

When backends cannot keep up with the volume of new series they must index, backpressure develops in the ingestion pipeline. Agents queue data, buffers fill up, and eventually telemetry gets dropped. That means teams lose visibility right when the system is under stress and visibility matters most.
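A minimal sketch of that failure mode, assuming an agent that holds samples in a fixed-size in-memory buffer and drops anything that does not fit. The buffer size and the sample values are arbitrary, and real agents typically add retries and disk spooling on top of this.

```python
import queue

# Hypothetical agent-side buffer; the size is an arbitrary assumption.
buffer = queue.Queue(maxsize=3)
dropped = 0

# The backend is not draining the buffer, so it fills up and
# later samples are discarded instead of being queued.
for sample in range(5):
    try:
        buffer.put_nowait(sample)
    except queue.Full:
        dropped += 1

print(f"buffered={buffer.qsize()} dropped={dropped}")
```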

Monitoring Blind Spots

Dropped metrics, delayed queries, and incomplete aggregations create blind spots during investigations. Teams may miss performance regressions, fail to detect anomalies, or struggle to reconstruct incident timelines.

In security use cases, incomplete telemetry can slow response and weaken confidence in the data. This is especially important for use cases tied to endpoint security, where telemetry quality affects detection and investigation depth.

Example: How Cardinality Multiplies

High cardinality often grows through multiplication rather than through any one obviously bad decision. A metric in a Kubernetes environment might already include labels for node, service, and endpoint. That may be manageable. But once a dynamic identifier such as request_id is added, the number of unique combinations can increase by orders of magnitude.

That is why cardinality issues often seem to appear suddenly. One extra label does not look dangerous in code review, but it can create millions of new series in production.
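The multiplication can be worked through with assumed numbers. The per-label counts below are hypothetical, and the product is an upper bound, since not every combination necessarily occurs in practice.

```python
from math import prod

# Hypothetical number of distinct values per label.
label_values = {"node": 50, "service": 20, "endpoint": 30}

def max_series(label_values):
    """Upper bound on series count: the product of distinct values per label."""
    return prod(label_values.values())

baseline = max_series(label_values)  # 50 * 20 * 30
with_request_id = max_series({**label_values, "request_id": 1_000_000})

print(f"baseline upper bound: {baseline:,}")
print(f"with request_id:      {with_request_id:,}")
```

Under these assumptions the bound goes from 30,000 to 30 billion. The realistic figure is closer to one series per request, because each request touches a single node, service, and endpoint, but that is still far more than the 30,000 series the metric could produce before.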

How to Reduce High Cardinality

The goal is not to eliminate detail, but rather to store the right detail in the right place.

Drop Unnecessary Labels at the Edge

One of the fastest ways to reduce cardinality is to strip noisy or irrelevant labels before they reach the backend. Edge collectors and telemetry pipelines can remove forbidden dimensions such as user IDs, request hashes, or temporary infrastructure identifiers before they create new time series.
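As a rough sketch of what such a filter does, the function below removes deny-listed label names from a sample before it is forwarded. The deny-list contents and the function name are assumptions for illustration; production pipelines usually express this as collector or relabeling configuration rather than application code.

```python
# Hypothetical deny-list of label names that must never reach the backend.
FORBIDDEN_LABELS = {"user_id", "request_id", "pod_ip"}

def drop_forbidden(labels, forbidden=FORBIDDEN_LABELS):
    """Return a copy of the label set without any deny-listed label names."""
    return {name: value for name, value in labels.items() if name not in forbidden}

sample = {"service": "checkout", "region": "us-east", "request_id": "a1b2c3"}
print(drop_forbidden(sample))
```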

Normalize Labels

Normalization reduces unnecessary variation. For example, a path such as /user/123 can be normalized to /user/{id} so that the metric reflects a stable route pattern instead of thousands of unique user-specific URLs. That simple change can dramatically reduce database load.
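A minimal sketch of that normalization, assuming the variable part of a path is always a purely numeric segment. Other identifier formats, such as UUIDs or slugs, would need their own patterns, and `normalize_path` is a hypothetical helper name.

```python
import re

# Matches a path segment made only of digits, followed by "/", "?", or end of string.
_NUMERIC_SEGMENT = re.compile(r"/\d+(?=[/?]|$)")

def normalize_path(path):
    """Replace numeric path segments with a stable {id} placeholder."""
    return _NUMERIC_SEGMENT.sub("/{id}", path)

print(normalize_path("/user/123"))
print(normalize_path("/user/123/orders/456"))
print(normalize_path("/v2/health"))
```

The first two calls collapse to `/user/{id}` and `/user/{id}/orders/{id}`, while `/v2/health` is left unchanged because `v2` is not a purely numeric segment.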

Aggregate and Roll Up Metrics

Aggregation and roll-up rules help preserve useful trends without storing every raw variation. Instead of tracking telemetry at the most granular possible level forever, teams can summarize metrics by service, cluster, region, or endpoint and store the aggregate for long-term analysis.
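One way to picture a roll-up is as a group-by that keeps only the stable labels and sums the rest away. The sketch below assumes counter-style values where summing is meaningful; gauges and percentiles need different aggregation functions. The series data and the `roll_up` helper are illustrative assumptions.

```python
from collections import defaultdict

def roll_up(series, keep):
    """Sum values across series, keeping only the labels named in `keep`."""
    totals = defaultdict(float)
    for labels, value in series:
        key = tuple((name, labels[name]) for name in keep if name in labels)
        totals[key] += value
    return dict(totals)

# Hypothetical per-pod request counts.
raw = [
    ({"service": "checkout", "pod": "checkout-7f9c", "region": "us-east"}, 12),
    ({"service": "checkout", "pod": "checkout-b21d", "region": "us-east"}, 8),
    ({"service": "search", "pod": "search-55aa", "region": "us-east"}, 5),
]

# Dropping the short-lived pod label merges the two checkout series into one.
by_service = roll_up(raw, keep=("service", "region"))
for key, total in by_service.items():
    print(dict(key), total)
```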

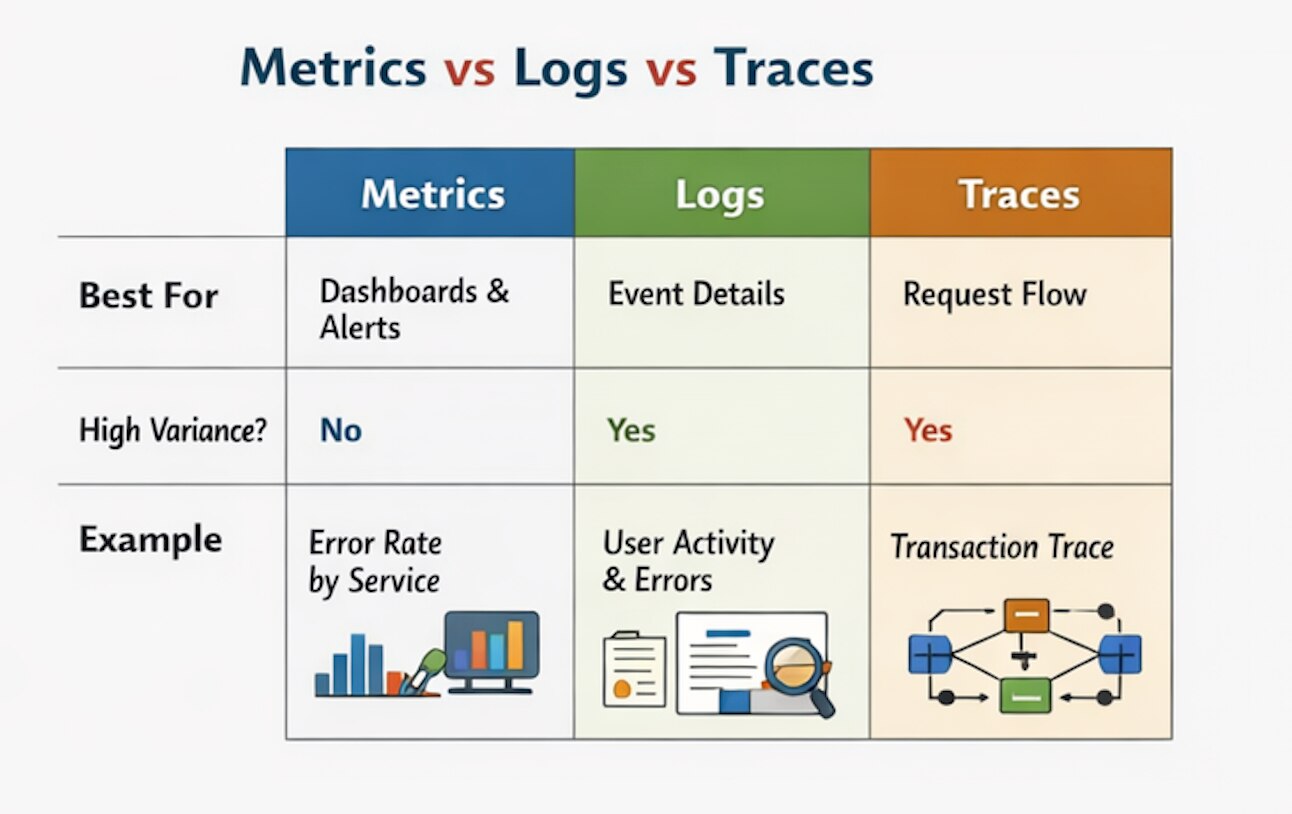

Use Logs and Traces for High-Variance Data

Metrics are best for trend analysis, alerting, and dashboards. Logs and traces are better for high-variance context such as request IDs, session values, and deep troubleshooting details. This is one of the most important design decisions in an observability strategy. Use metrics for the signal, and use logs or traces for the story behind it.
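The split might look like the following sketch, which uses a plain `Counter` as a stand-in for a metrics client and the standard `logging` module for the event record. The function name, label choices, and identifiers are assumptions for illustration.

```python
import json
import logging
from collections import Counter

logging.basicConfig(level=logging.INFO, format="%(message)s")
logger = logging.getLogger("checkout")

# Stand-in for a metrics client: one counter per bounded label combination.
request_counter = Counter()

def record_request(service, status_code, request_id, user_id):
    # Metric: bounded labels only, so the series count stays small.
    request_counter[(service, status_code)] += 1
    # Log: the high-variance identifiers travel with the event record instead.
    logger.info(json.dumps({
        "service": service,
        "status_code": status_code,
        "request_id": request_id,
        "user_id": user_id,
    }))

for i in range(3):
    record_request("checkout", 200, request_id=f"req-{i}", user_id=f"user-{i}")

print(dict(request_counter))
```

Three requests with three different request IDs still produce a single metric series, while each log line keeps the identifiers needed to investigate an individual request.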

Choose Platforms Built for Telemetry Scale

Traditional relational systems are not designed for high-ingestion observability workloads. Purpose-built time-series and observability platforms are better equipped to handle dimensional data, compression, and query distribution. Even so, no platform magically fixes bad label hygiene. Tooling helps, but governance does the heavy lifting.

Metrics vs. Logs vs. Traces for High-Cardinality Data

A successful observability strategy relies on selecting the appropriate telemetry type for each task. The distinction is important because teams often overload metrics with excessive detail.

When this happens, dashboards and alerts become harder to manage. A better approach is to store dynamic context in logs or traces and to link from the metric to that context during an investigation. This practice also matters for AIOps, because effective automation requires rigorous telemetry discipline.

| Telemetry Type | Best For | Handles High Variance Well? | Example Use |
|---|---|---|---|
| Metrics | Dashboards, trends, alerting | No | Error rate by service or CPU by cluster |
| Logs | Detailed event records | Yes | User activity, request details, audit events |
| Traces | End-to-end request flow | Yes | Following a transaction across microservices |

Best Practices for Managing High Cardinality

Teams can reduce cardinality risk by following a few practical rules:

- Avoid dynamic identifiers in metric labels.

- Use bounded values wherever possible.

- Normalize paths and resource names before ingestion.

- Aggregate telemetry when raw granularity is not needed for alerting.

- Route high-variance context into logs and traces instead of metrics.

- Audit third-party integrations and collector defaults.

- Set governance standards for instrumentation across engineering teams.

Why High Cardinality Is a Governance Problem

High cardinality is often treated as an observability platform issue, but the root cause usually starts much earlier. It starts in instrumentation choices, naming conventions, ownership boundaries, and whether teams agree on what belongs in a metric at all. That is why governance matters.

Without shared standards, every team can add just one “helpful” label until the whole stack starts wheezing. Organizations that manage cardinality well do not simply buy bigger infrastructure; they create rules for telemetry design, review instrumentation changes, and build pipelines that enforce those standards before data reaches storage.
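A pipeline-level check of that kind could be sketched as follows. The allow-list, the series budget, and the `CardinalityGuard` class are hypothetical and stand in for whatever standard a team agrees on; the point is only that the rule is enforced before a sample reaches storage.

```python
# Hypothetical organization-wide standard: permitted label names and a series budget.
ALLOWED_LABELS = {"service", "endpoint", "region", "status_code"}
MAX_SERIES_PER_METRIC = 1000

class CardinalityGuard:
    """Rejects samples that use unapproved labels or exceed the series budget."""

    def __init__(self, allowed=ALLOWED_LABELS, max_series=MAX_SERIES_PER_METRIC):
        self.allowed = set(allowed)
        self.max_series = max_series
        self._seen = {}  # metric name -> set of label combinations already admitted

    def admit(self, metric, labels):
        """Return True if the sample may be stored, False if it should be rejected."""
        if not set(labels) <= self.allowed:
            return False  # uses a label name outside the agreed standard
        combos = self._seen.setdefault(metric, set())
        key = tuple(sorted(labels.items()))
        if key not in combos and len(combos) >= self.max_series:
            return False  # a new series would exceed the per-metric budget
        combos.add(key)
        return True

guard = CardinalityGuard(max_series=2)
print(guard.admit("http_requests", {"service": "checkout", "region": "us-east"}))
print(guard.admit("http_requests", {"service": "checkout", "user_id": "u42"}))
```

In this sketch the first sample is admitted and the second is rejected because `user_id` is not on the allow-list. A real deployment would also need to decide what happens to rejected samples, for example dropping the offending label rather than the whole sample.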